Computer vision has become extremely good at recognizing what is visible in an image. Objects, patterns and surfaces can be identified with high precision.

But when the technology is applied to buildings, problems often arise.

Not because the models are weak, but because they are missing something fundamental: context.

The problem: a building is not just an object

One of the most overlooked challenges is also one of the most important. How do you actually define a building?

In practice, buildings rarely stand alone. They sit close together, overlap visually and appear differently depending on angle, height and surroundings. For a human, it is intuitive what belongs together. For a model, it is not.

If the model cannot clearly separate one building from another, everything that follows becomes less reliable. Outputs can still be generated, but they are harder to trust and harder to use in practice.

Why computer vision falls short in a building context

Most computer vision models are designed for general image understanding, not structured assets like buildings.

They can recognize what is visible, but they do not understand functional boundaries. They do not know which pixels belong to which building, and therefore not what is actually being analysed.

This becomes a problem as soon as you want to use the output for something concrete. Without a clear definition, the data loses both precision and value.

Our approach: define the building before the analysis

Instead of starting with the image, we start with the building.

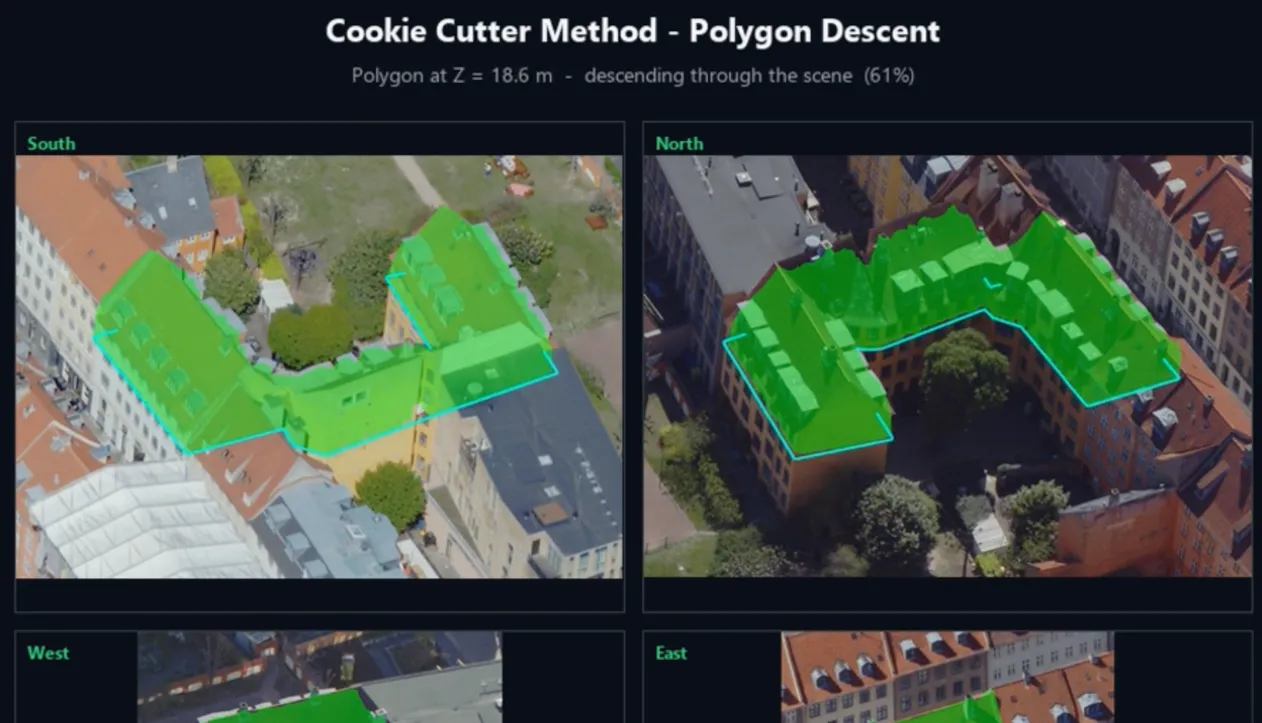

We combine building polygons with height data to isolate the exact structure we want to analyse. Internally, we refer to this as the “cookie cutter method”.

The idea is simple, but critical. By cutting the building out of its surroundings before passing it to the model, we ensure that the input is clear and consistent. The model no longer works on a complex, undefined image, but on a well-defined unit.

What this changes in practice

Once the building is isolated, the quality of the output changes significantly.

It becomes possible to estimate materials, identify visible building components and understand their distribution in a more consistent way. That data can then be structured and used directly in condition assessments and maintenance planning.

The difference is not just technical. It determines whether the data can support decisions or not.

From image recognition to decision support

The goal is not just to recognize what is visible, but to create data that can be used in practice.

By combining domain-specific data with computer vision, we move away from generic image analysis and toward something that can support real operational decisions.

This is where the technology starts to create real value in a building context.

See how it works in practice

In the video, Mikkel Jensen (Co-Founder & CDSO) and Anders Johannesen Berner (Data Engineer) explain how the approach works and what it enables.

Why this matters

Computer vision will play an important role in the future of building data. But if it is to create real value, it needs to reflect how buildings are actually structured and managed.

That starts with something as simple, and as critical, as defining where a building begins.